Add e2e tests

parent

99a355f25d

commit

601fb7dacf

1163 changed files with 289217 additions and 14195 deletions

5

vendor/github.com/onsi/ginkgo/.gitignore

generated

vendored

Normal file

@@ -0,0 +1,5 @@
.DS_Store
TODO
tmp/**/*
*.coverprofile
.vscode

15

vendor/github.com/onsi/ginkgo/.travis.yml

generated

vendored

Normal file

@@ -0,0 +1,15 @@
language: go
go:
  - 1.5.x
  - 1.6.x
  - 1.7.x
  - 1.8.x

install:
  - go get -v -t ./...
  - go get golang.org/x/tools/cmd/cover
  - go get github.com/onsi/gomega
  - go install github.com/onsi/ginkgo/ginkgo
  - export PATH=$PATH:$HOME/gopath/bin

script: $HOME/gopath/bin/ginkgo -r --randomizeAllSpecs --randomizeSuites --race --trace

152

vendor/github.com/onsi/ginkgo/CHANGELOG.md

generated

vendored

Normal file

@@ -0,0 +1,152 @@
## 1.4.0 7/16/2017

- `ginkgo` now provides a hint if you accidentally forget to run `ginkgo bootstrap` to generate a `*_suite_test.go` file that actually invokes the Ginkgo test runner. [#345](https://github.com/onsi/ginkgo/pull/345)
- thanks to improvements in `go test -c` `ginkgo` no longer needs to fix Go's compilation output to ensure compilation errors are expressed relative to the CWD. [#357]
- `ginkgo watch -watchRegExp=...` allows you to specify a custom regular expression to watch. Only files matching the regular expression are watched for changes (the default is `\.go$`) [#356]
- `ginkgo` now always emits compilation output. Previously, only failed compilation output was printed out. [#277]
- `ginkgo -requireSuite` now fails the test run if there are `*_test.go` files but `go test` fails to detect any tests. Typically this means you forgot to run `ginkgo bootstrap` to generate a suite file. [#344]
- `ginkgo -timeout=DURATION` allows you to adjust the timeout for the entire test suite (default is 24 hours) [#248]

## 1.3.0 3/28/2017

|

||||

|

||||

Improvements:

|

||||

|

||||

- Significantly improved parallel test distribution. Now instead of pre-sharding test cases across workers (which can result in idle workers and poor test performance) Ginkgo uses a shared queue to keep all workers busy until all tests are complete. This improves test-time performance and consistency.

|

||||

- `Skip(message)` can be used to skip the current test.

|

||||

- Added `extensions/table` - a Ginkgo DSL for [Table Driven Tests](http://onsi.github.io/ginkgo/#table-driven-tests)

|

||||

- Add `GinkgoRandomSeed()` - shorthand for `config.GinkgoConfig.RandomSeed`

|

||||

- Support for retrying flaky tests with `--flakeAttempts`

|

||||

- `ginkgo ./...` now recurses as you'd expect

|

||||

- Added `Specify` a synonym for `It`

|

||||

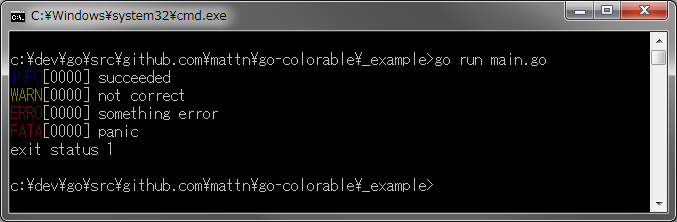

- Support colorise on Windows

|

||||

- Broader support for various go compilation flags in the `ginkgo` CLI

|

||||

|

||||

Bug Fixes:

|

||||

|

||||

- Ginkgo tests now fail when you `panic(nil)` (#167)

|

||||

|

||||

## 1.2.0 5/31/2015

Improvements

- `ginkgo -coverpkg` calls down to `go test -coverpkg` (#160)
- `ginkgo -afterSuiteHook COMMAND` invokes the passed-in `COMMAND` after a test suite completes (#152)
- Relaxed requirement for Go 1.4+. `ginkgo` now works with Go v1.3+ (#166)

## 1.2.0-beta

Ginkgo now requires Go 1.4+

Improvements:

- Call reporters in reverse order when announcing spec completion -- allows custom reporters to emit output before the default reporter does.
- Improved focus behavior. Now, this:

    ```golang
    FDescribe("Some describe", func() {
        It("A", func() {})

        FIt("B", func() {})
    })
    ```

  will run `B` but *not* `A`. This tends to be a common usage pattern when in the thick of writing and debugging tests.
- When `SIGINT` is received, Ginkgo will emit the contents of the `GinkgoWriter` before running the `AfterSuite`. Useful for debugging stuck tests.
- When `--progress` is set, Ginkgo will write test progress (in particular, Ginkgo will say when it is about to run a BeforeEach, AfterEach, It, etc...) to the `GinkgoWriter`. This is useful for debugging stuck tests and tests that generate many logs.
- Improved output when an error occurs in a setup or teardown block.
- When `--dryRun` is set, Ginkgo will walk the spec tree and emit to its reporter *without* actually running anything. Best paired with `-v` to understand which specs will run in which order.
- Add `By` to help document long `It`s. `By` simply writes to the `GinkgoWriter`.
- Add support for precompiled tests:
    - `ginkgo build <path-to-package>` will now compile the package, producing a file named `package.test`
    - The compiled `package.test` file can be run directly. This runs the tests in series.
    - To run precompiled tests in parallel, you can run: `ginkgo -p package.test`
- Support `bootstrap`ping and `generate`ing [Agouti](http://agouti.org) specs.
- `ginkgo generate` and `ginkgo bootstrap` now honor the package name already defined in a given directory
- The `ginkgo` CLI ignores `SIGQUIT`. Prevents its stack dump from interlacing with the underlying test suite's stack dump.
- The `ginkgo` CLI now compiles tests into a temporary directory instead of the package directory. This necessitates upgrading to Go v1.4+.
- `ginkgo -notify` now works on Linux

Bug Fixes:

- If --skipPackages is used and all packages are skipped, Ginkgo should exit 0.
- Fix tempfile leak when running in parallel
- Fix incorrect failure message when a panic occurs during a parallel test run
- Fixed an issue where a pending test within a focused context (or a focused test within a pending context) would skip all other tests.
- Be more consistent about handling SIGTERM as well as SIGINT
- When interrupted while concurrently compiling test suites in the background, Ginkgo now cleans up the compiled artifacts.
- Fixed a long standing bug where `ginkgo -p` would hang if a process spawned by one of the Ginkgo parallel nodes does not exit. (Hooray!)

## 1.1.0 (8/2/2014)

No changes, just dropping the beta.

## 1.1.0-beta (7/22/2014)
New Features:

- `ginkgo watch` now monitors packages *and their dependencies* for changes. The depth of the dependency tree can be modified with the `-depth` flag.
- Test suites with a programmatic focus (`FIt`, `FDescribe`, etc...) exit with non-zero status code, even when they pass. This allows CI systems to detect accidental commits of focused test suites.
- `ginkgo -p` runs the testsuite in parallel with an auto-detected number of nodes.
- `ginkgo -tags=TAG_LIST` passes a list of tags down to the `go build` command.
- `ginkgo --failFast` aborts the test suite after the first failure.
- `ginkgo generate file_1 file_2` can take multiple file arguments.
- Ginkgo now summarizes any spec failures that occurred at the end of the test run.
- `ginkgo --randomizeSuites` will run test *suites* in random order using the generated/passed-in seed.

Improvements:

- `ginkgo -skipPackage` now takes a comma-separated list of strings. If the *relative path* to a package matches one of the entries in the comma-separated list, that package is skipped.
- `ginkgo --untilItFails` no longer recompiles between attempts.
- Ginkgo now panics when a runnable node (`It`, `BeforeEach`, `JustBeforeEach`, `AfterEach`, `Measure`) is nested within another runnable node. This is always a mistake. Any test suites that panic because of this change should be fixed.

Bug Fixes:

- `ginkgo bootstrap` and `ginkgo generate` no longer fail when dealing with `hyphen-separated-packages`.
- parallel specs are now better distributed across nodes - fixed a crashing bug where (for example) distributing 11 tests across 7 nodes would panic

## 1.0.0 (5/24/2014)
New Features:

- Add `GinkgoParallelNode()` - shorthand for `config.GinkgoConfig.ParallelNode`

Improvements:

- When compilation fails, the compilation output is rewritten to present a correct *relative* path. Allows ⌘-clicking in iTerm to open the file in your text editor.
- `--untilItFails` and `ginkgo watch` now generate new random seeds between test runs, unless a particular random seed is specified.

Bug Fixes:

- `-cover` now generates a correctly combined coverprofile when running in parallel with multiple `-node`s.
- Print out the contents of the `GinkgoWriter` when `BeforeSuite` or `AfterSuite` fail.
- Fix all remaining race conditions in Ginkgo's test suite.

## 1.0.0-beta (4/14/2014)
Breaking changes:

- `thirdparty/gomocktestreporter` is gone. Use `GinkgoT()` instead
- Modified the Reporter interface
- `watch` is now a subcommand, not a flag.

DSL changes:

- `BeforeSuite` and `AfterSuite` for setting up and tearing down test suites.
- `AfterSuite` is triggered on interrupt (`^C`) as well as exit.
- `SynchronizedBeforeSuite` and `SynchronizedAfterSuite` for setting up and tearing down singleton resources across parallel nodes.

CLI changes:

- `watch` is now a subcommand, not a flag
- `--nodot` flag can be passed to `ginkgo generate` and `ginkgo bootstrap` to avoid dot imports. This explicitly imports all exported identifiers in Ginkgo and Gomega. Refreshing this list can be done by running `ginkgo nodot`
- Additional arguments can be passed to specs. Pass them after the `--` separator
- `--skipPackage` flag takes a regexp and ignores any packages with package names passing said regexp.
- `--trace` flag prints out full stack traces when errors occur, not just the line at which the error occurs.

Misc:

- Start using semantic versioning
- Start maintaining changelog

Major refactor:

- Pull out Ginkgo's internal to `internal`
- Rename `example` everywhere to `spec`
- Much more!
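
The 1.3.0 changelog entry above describes replacing pre-sharded spec distribution with a shared queue. The following is a minimal in-process sketch of that idea, not Ginkgo's implementation: goroutines stand in for the separate parallel node processes, and `runWithSharedQueue` is a hypothetical name introduced only for this illustration.

```go
package main

import (
	"fmt"
	"sync"
)

// runWithSharedQueue is a hypothetical helper (not part of Ginkgo).
// Every worker pulls from the same channel, so a worker that finishes
// early takes the next spec instead of idling on an exhausted shard.
func runWithSharedQueue(specs []string, workers int) map[int][]string {
	queue := make(chan string)
	ran := make(map[int][]string)
	var mu sync.Mutex
	var wg sync.WaitGroup

	for node := 1; node <= workers; node++ {
		wg.Add(1)
		go func(node int) {
			defer wg.Done()
			for spec := range queue {
				// "Running" a spec is reduced to recording which node took it.
				mu.Lock()
				ran[node] = append(ran[node], spec)
				mu.Unlock()
			}
		}(node)
	}

	for _, spec := range specs {
		queue <- spec
	}
	close(queue) // no more specs: workers drain the queue and exit
	wg.Wait()
	return ran
}

func main() {
	specs := make([]string, 11)
	for i := range specs {
		specs[i] = fmt.Sprintf("spec-%d", i+1)
	}
	// 11 specs across 7 workers, the case mentioned in the 1.1.0-beta bug fix.
	for node, done := range runWithSharedQueue(specs, 7) {
		fmt.Printf("node %d ran %d spec(s)\n", node, len(done))
	}
}
```

Which node picks up which spec varies from run to run; the only property the sketch relies on is that each spec is handed to exactly one worker.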

12

vendor/github.com/onsi/ginkgo/CONTRIBUTING.md

generated

vendored

Normal file

@@ -0,0 +1,12 @@
# Contributing to Ginkgo

Your contributions to Ginkgo are essential for its long-term maintenance and improvement. To make a contribution:

- Please **open an issue first** - describe what problem you are trying to solve and give the community a forum for input and feedback ahead of investing time in writing code!
- Ensure adequate test coverage:
    - If you're adding functionality to the Ginkgo library, make sure to add appropriate unit and/or integration tests (under the `integration` folder).
    - If you're adding functionality to the Ginkgo CLI note that there are very few unit tests. Please add an integration test.
- Please run all tests locally (`ginkgo -r -p`) and make sure they go green before submitting the PR
- Update the documentation. In addition to standard `godoc` comments Ginkgo has extensive documentation on the `gh-pages` branch. If relevant, please submit a docs PR to that branch alongside your code PR.

Thanks for supporting Ginkgo!

20

vendor/github.com/onsi/ginkgo/LICENSE

generated

vendored

Normal file

@@ -0,0 +1,20 @@
Copyright (c) 2013-2014 Onsi Fakhouri

Permission is hereby granted, free of charge, to any person obtaining
a copy of this software and associated documentation files (the
"Software"), to deal in the Software without restriction, including
without limitation the rights to use, copy, modify, merge, publish,
distribute, sublicense, and/or sell copies of the Software, and to
permit persons to whom the Software is furnished to do so, subject to
the following conditions:

The above copyright notice and this permission notice shall be
included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,
EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF
MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND
NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE
LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION
OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION
WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

117

vendor/github.com/onsi/ginkgo/README.md

generated

vendored

Normal file

@@ -0,0 +1,117 @@
[Build Status](https://travis-ci.org/onsi/ginkgo)

Jump to the [docs](http://onsi.github.io/ginkgo/) to learn more. To start rolling your Ginkgo tests *now* [keep reading](#set-me-up)!

If you have a question, comment, bug report, feature request, etc. please open a GitHub issue.

## Feature List

- Ginkgo uses Go's `testing` package and can live alongside your existing `testing` tests. It's easy to [bootstrap](http://onsi.github.io/ginkgo/#bootstrapping-a-suite) and start writing your [first tests](http://onsi.github.io/ginkgo/#adding-specs-to-a-suite)

- Structure your BDD-style tests expressively:
    - Nestable [`Describe` and `Context` container blocks](http://onsi.github.io/ginkgo/#organizing-specs-with-containers-describe-and-context)
    - [`BeforeEach` and `AfterEach` blocks](http://onsi.github.io/ginkgo/#extracting-common-setup-beforeeach) for setup and teardown
    - [`It` blocks](http://onsi.github.io/ginkgo/#individual-specs-) that hold your assertions
    - [`JustBeforeEach` blocks](http://onsi.github.io/ginkgo/#separating-creation-and-configuration-justbeforeeach) that separate creation from configuration (also known as the subject action pattern).
    - [`BeforeSuite` and `AfterSuite` blocks](http://onsi.github.io/ginkgo/#global-setup-and-teardown-beforesuite-and-aftersuite) to prep for and cleanup after a suite.

- A comprehensive test runner that lets you:
    - Mark specs as [pending](http://onsi.github.io/ginkgo/#pending-specs)
    - [Focus](http://onsi.github.io/ginkgo/#focused-specs) individual specs, and groups of specs, either programmatically or on the command line
    - Run your tests in [random order](http://onsi.github.io/ginkgo/#spec-permutation), and then reuse random seeds to replicate the same order.
    - Break up your test suite into parallel processes for straightforward [test parallelization](http://onsi.github.io/ginkgo/#parallel-specs)

- `ginkgo`: a command line interface with plenty of handy command line arguments for [running your tests](http://onsi.github.io/ginkgo/#running-tests) and [generating](http://onsi.github.io/ginkgo/#generators) test files. Here are a few choice examples:
    - `ginkgo -nodes=N` runs your tests in `N` parallel processes and prints out coherent output in realtime
    - `ginkgo -cover` runs your tests using Golang's code coverage tool
    - `ginkgo convert` converts an XUnit-style `testing` package to a Ginkgo-style package
    - `ginkgo -focus="REGEXP"` and `ginkgo -skip="REGEXP"` allow you to specify a subset of tests to run via regular expression
    - `ginkgo -r` runs all test suites under the current directory
    - `ginkgo -v` prints out identifying information for each test just before it runs

    And much more: run `ginkgo help` for details!

    The `ginkgo` CLI is convenient, but purely optional -- Ginkgo works just fine with `go test`

- `ginkgo watch` [watches](https://onsi.github.io/ginkgo/#watching-for-changes) packages *and their dependencies* for changes, then reruns tests. Run tests immediately as you develop!

- Built-in support for testing [asynchronicity](http://onsi.github.io/ginkgo/#asynchronous-tests)

- Built-in support for [benchmarking](http://onsi.github.io/ginkgo/#benchmark-tests) your code. Control the number of benchmark samples as you gather runtimes and other, arbitrary, bits of numerical information about your code.

- [Completions for Sublime Text](https://github.com/onsi/ginkgo-sublime-completions): just use [Package Control](https://sublime.wbond.net/) to install `Ginkgo Completions`.

- [Completions for VSCode](https://github.com/onsi/vscode-ginkgo): just use VSCode's extension installer to install `vscode-ginkgo`.

- Straightforward support for third-party testing libraries such as [Gomock](https://code.google.com/p/gomock/) and [Testify](https://github.com/stretchr/testify). Check out the [docs](http://onsi.github.io/ginkgo/#third-party-integrations) for details.

- A modular architecture that lets you easily:
    - Write [custom reporters](http://onsi.github.io/ginkgo/#writing-custom-reporters) (for example, Ginkgo comes with a [JUnit XML reporter](http://onsi.github.io/ginkgo/#generating-junit-xml-output) and a TeamCity reporter).
    - [Adapt an existing matcher library (or write your own!)](http://onsi.github.io/ginkgo/#using-other-matcher-libraries) to work with Ginkgo

## [Gomega](http://github.com/onsi/gomega): Ginkgo's Preferred Matcher Library

Ginkgo is best paired with Gomega. Learn more about Gomega [here](http://onsi.github.io/gomega/)

## [Agouti](http://github.com/sclevine/agouti): A Golang Acceptance Testing Framework

Agouti allows you to run WebDriver integration tests. Learn more about Agouti [here](http://agouti.org)

## Set Me Up!

You'll need Golang v1.3+ (Ubuntu users: you probably have Golang v1.0 -- you'll need to upgrade!)

```bash

go get github.com/onsi/ginkgo/ginkgo  # installs the ginkgo CLI
go get github.com/onsi/gomega         # fetches the matcher library

cd path/to/package/you/want/to/test

ginkgo bootstrap # set up a new ginkgo suite
ginkgo generate  # will create a sample test file. edit this file and add your tests then...

go test # to run your tests

ginkgo  # also runs your tests

```

## I'm new to Go: What are my testing options?

Of course, I heartily recommend [Ginkgo](https://github.com/onsi/ginkgo) and [Gomega](https://github.com/onsi/gomega). Both packages are seeing heavy, daily, production use on a number of projects and boast a mature and comprehensive feature-set.

With that said, it's great to know what your options are :)

### What Golang gives you out of the box

Testing is a first class citizen in Golang. However, Go's built-in testing primitives are somewhat limited: the [testing](http://golang.org/pkg/testing) package provides basic XUnit style tests and no assertion library.

### Matcher libraries for Golang's XUnit style tests

A number of matcher libraries have been written to augment Go's built-in XUnit style tests. Here are two that have gained traction:

- [testify](https://github.com/stretchr/testify)
- [gocheck](http://labix.org/gocheck)

You can also use Ginkgo's matcher library [Gomega](https://github.com/onsi/gomega) in [XUnit style tests](http://onsi.github.io/gomega/#using-gomega-with-golangs-xunitstyle-tests)

### BDD style testing frameworks

There are a handful of BDD-style testing frameworks written for Golang. Here are a few:

- [Ginkgo](https://github.com/onsi/ginkgo) ;)
- [GoConvey](https://github.com/smartystreets/goconvey)
- [Goblin](https://github.com/franela/goblin)
- [Mao](https://github.com/azer/mao)
- [Zen](https://github.com/pranavraja/zen)

Finally, @shageman has [put together](https://github.com/shageman/gotestit) a comprehensive comparison of golang testing libraries.

Go explore!

## License

Ginkgo is MIT-Licensed

187

vendor/github.com/onsi/ginkgo/config/config.go

generated

vendored

Normal file

@@ -0,0 +1,187 @@
/*
Ginkgo accepts a number of configuration options.

These are documented [here](http://onsi.github.io/ginkgo/#the_ginkgo_cli)

You can also learn more via

	ginkgo help

or (I kid you not):

	go test -asdf
*/
package config

import (
	"flag"
	"time"

	"fmt"
)

const VERSION = "1.4.0"

type GinkgoConfigType struct {
	RandomSeed         int64
	RandomizeAllSpecs  bool
	RegexScansFilePath bool
	FocusString        string
	SkipString         string
	SkipMeasurements   bool
	FailOnPending      bool
	FailFast           bool
	FlakeAttempts      int
	EmitSpecProgress   bool
	DryRun             bool

	ParallelNode  int
	ParallelTotal int
	SyncHost      string
	StreamHost    string
}

var GinkgoConfig = GinkgoConfigType{}

type DefaultReporterConfigType struct {
	NoColor           bool
	SlowSpecThreshold float64
	NoisyPendings     bool
	Succinct          bool
	Verbose           bool
	FullTrace         bool
}

var DefaultReporterConfig = DefaultReporterConfigType{}

func processPrefix(prefix string) string {
	if prefix != "" {
		prefix = prefix + "."
	}
	return prefix
}

func Flags(flagSet *flag.FlagSet, prefix string, includeParallelFlags bool) {
	prefix = processPrefix(prefix)
	flagSet.Int64Var(&(GinkgoConfig.RandomSeed), prefix+"seed", time.Now().Unix(), "The seed used to randomize the spec suite.")
	flagSet.BoolVar(&(GinkgoConfig.RandomizeAllSpecs), prefix+"randomizeAllSpecs", false, "If set, ginkgo will randomize all specs together. By default, ginkgo only randomizes the top level Describe/Context groups.")
	flagSet.BoolVar(&(GinkgoConfig.SkipMeasurements), prefix+"skipMeasurements", false, "If set, ginkgo will skip any measurement specs.")
	flagSet.BoolVar(&(GinkgoConfig.FailOnPending), prefix+"failOnPending", false, "If set, ginkgo will mark the test suite as failed if any specs are pending.")
	flagSet.BoolVar(&(GinkgoConfig.FailFast), prefix+"failFast", false, "If set, ginkgo will stop running a test suite after a failure occurs.")

	flagSet.BoolVar(&(GinkgoConfig.DryRun), prefix+"dryRun", false, "If set, ginkgo will walk the test hierarchy without actually running anything. Best paired with -v.")

	flagSet.StringVar(&(GinkgoConfig.FocusString), prefix+"focus", "", "If set, ginkgo will only run specs that match this regular expression.")
	flagSet.StringVar(&(GinkgoConfig.SkipString), prefix+"skip", "", "If set, ginkgo will only run specs that do not match this regular expression.")

	flagSet.BoolVar(&(GinkgoConfig.RegexScansFilePath), prefix+"regexScansFilePath", false, "If set, ginkgo regex matching also will look at the file path (code location).")

	flagSet.IntVar(&(GinkgoConfig.FlakeAttempts), prefix+"flakeAttempts", 1, "Make up to this many attempts to run each spec. Please note that if any of the attempts succeed, the suite will not be failed. But any failures will still be recorded.")

	flagSet.BoolVar(&(GinkgoConfig.EmitSpecProgress), prefix+"progress", false, "If set, ginkgo will emit progress information as each spec runs to the GinkgoWriter.")

	if includeParallelFlags {
		flagSet.IntVar(&(GinkgoConfig.ParallelNode), prefix+"parallel.node", 1, "This worker node's (one-indexed) node number. For running specs in parallel.")
		flagSet.IntVar(&(GinkgoConfig.ParallelTotal), prefix+"parallel.total", 1, "The total number of worker nodes. For running specs in parallel.")
		flagSet.StringVar(&(GinkgoConfig.SyncHost), prefix+"parallel.synchost", "", "The address for the server that will synchronize the running nodes.")
		flagSet.StringVar(&(GinkgoConfig.StreamHost), prefix+"parallel.streamhost", "", "The address for the server that the running nodes should stream data to.")
	}

	flagSet.BoolVar(&(DefaultReporterConfig.NoColor), prefix+"noColor", false, "If set, suppress color output in default reporter.")
	flagSet.Float64Var(&(DefaultReporterConfig.SlowSpecThreshold), prefix+"slowSpecThreshold", 5.0, "(in seconds) Specs that take longer to run than this threshold are flagged as slow by the default reporter.")
	flagSet.BoolVar(&(DefaultReporterConfig.NoisyPendings), prefix+"noisyPendings", true, "If set, default reporter will shout about pending tests.")
	flagSet.BoolVar(&(DefaultReporterConfig.Verbose), prefix+"v", false, "If set, default reporter print out all specs as they begin.")
	flagSet.BoolVar(&(DefaultReporterConfig.Succinct), prefix+"succinct", false, "If set, default reporter prints out a very succinct report")
	flagSet.BoolVar(&(DefaultReporterConfig.FullTrace), prefix+"trace", false, "If set, default reporter prints out the full stack trace when a failure occurs")
}

func BuildFlagArgs(prefix string, ginkgo GinkgoConfigType, reporter DefaultReporterConfigType) []string {
	prefix = processPrefix(prefix)
	result := make([]string, 0)

	if ginkgo.RandomSeed > 0 {
		result = append(result, fmt.Sprintf("--%sseed=%d", prefix, ginkgo.RandomSeed))
	}

	if ginkgo.RandomizeAllSpecs {
		result = append(result, fmt.Sprintf("--%srandomizeAllSpecs", prefix))
	}

	if ginkgo.SkipMeasurements {
		result = append(result, fmt.Sprintf("--%sskipMeasurements", prefix))
	}

	if ginkgo.FailOnPending {
		result = append(result, fmt.Sprintf("--%sfailOnPending", prefix))
	}

	if ginkgo.FailFast {
		result = append(result, fmt.Sprintf("--%sfailFast", prefix))
	}

	if ginkgo.DryRun {
		result = append(result, fmt.Sprintf("--%sdryRun", prefix))
	}

	if ginkgo.FocusString != "" {
		result = append(result, fmt.Sprintf("--%sfocus=%s", prefix, ginkgo.FocusString))
	}

	if ginkgo.SkipString != "" {
		result = append(result, fmt.Sprintf("--%sskip=%s", prefix, ginkgo.SkipString))
	}

	if ginkgo.FlakeAttempts > 1 {
		result = append(result, fmt.Sprintf("--%sflakeAttempts=%d", prefix, ginkgo.FlakeAttempts))
	}

	if ginkgo.EmitSpecProgress {
		result = append(result, fmt.Sprintf("--%sprogress", prefix))
	}

	if ginkgo.ParallelNode != 0 {
		result = append(result, fmt.Sprintf("--%sparallel.node=%d", prefix, ginkgo.ParallelNode))
	}

	if ginkgo.ParallelTotal != 0 {
		result = append(result, fmt.Sprintf("--%sparallel.total=%d", prefix, ginkgo.ParallelTotal))
	}

	if ginkgo.StreamHost != "" {
		result = append(result, fmt.Sprintf("--%sparallel.streamhost=%s", prefix, ginkgo.StreamHost))
	}

	if ginkgo.SyncHost != "" {
		result = append(result, fmt.Sprintf("--%sparallel.synchost=%s", prefix, ginkgo.SyncHost))
	}

	if ginkgo.RegexScansFilePath {
		result = append(result, fmt.Sprintf("--%sregexScansFilePath", prefix))
	}

	if reporter.NoColor {
		result = append(result, fmt.Sprintf("--%snoColor", prefix))
	}

	if reporter.SlowSpecThreshold > 0 {
		result = append(result, fmt.Sprintf("--%sslowSpecThreshold=%.5f", prefix, reporter.SlowSpecThreshold))
	}

	if !reporter.NoisyPendings {
		result = append(result, fmt.Sprintf("--%snoisyPendings=false", prefix))
	}

	if reporter.Verbose {
		result = append(result, fmt.Sprintf("--%sv", prefix))
	}

	if reporter.Succinct {
		result = append(result, fmt.Sprintf("--%ssuccinct", prefix))
	}

	if reporter.FullTrace {
		result = append(result, fmt.Sprintf("--%strace", prefix))
	}

	return result
}

569

vendor/github.com/onsi/ginkgo/ginkgo_dsl.go

generated

vendored

Normal file

@@ -0,0 +1,569 @@
/*
Ginkgo is a BDD-style testing framework for Golang

The godoc documentation describes Ginkgo's API. More comprehensive documentation (with examples!) is available at http://onsi.github.io/ginkgo/

Ginkgo's preferred matcher library is [Gomega](http://github.com/onsi/gomega)

Ginkgo on Github: http://github.com/onsi/ginkgo

Ginkgo is MIT-Licensed
*/
package ginkgo

import (
	"flag"
	"fmt"
	"io"
	"net/http"
	"os"
	"strings"
	"time"

	"github.com/onsi/ginkgo/config"
	"github.com/onsi/ginkgo/internal/codelocation"
	"github.com/onsi/ginkgo/internal/failer"
	"github.com/onsi/ginkgo/internal/remote"
	"github.com/onsi/ginkgo/internal/suite"
	"github.com/onsi/ginkgo/internal/testingtproxy"
	"github.com/onsi/ginkgo/internal/writer"
	"github.com/onsi/ginkgo/reporters"
	"github.com/onsi/ginkgo/reporters/stenographer"
	"github.com/onsi/ginkgo/types"
)

const GINKGO_VERSION = config.VERSION
const GINKGO_PANIC = `
Your test failed.
Ginkgo panics to prevent subsequent assertions from running.
Normally Ginkgo rescues this panic so you shouldn't see it.

But, if you make an assertion in a goroutine, Ginkgo can't capture the panic.
To circumvent this, you should call

	defer GinkgoRecover()

at the top of the goroutine that caused this panic.
`
const defaultTimeout = 1

var globalSuite *suite.Suite
var globalFailer *failer.Failer

func init() {
	config.Flags(flag.CommandLine, "ginkgo", true)
	GinkgoWriter = writer.New(os.Stdout)
	globalFailer = failer.New()
	globalSuite = suite.New(globalFailer)
}

//GinkgoWriter implements an io.Writer
//When running in verbose mode any writes to GinkgoWriter will be immediately printed
//to stdout. Otherwise, GinkgoWriter will buffer any writes produced during the current test and flush them to screen
//only if the current test fails.
var GinkgoWriter io.Writer

//The interface by which Ginkgo receives *testing.T
type GinkgoTestingT interface {
	Fail()
}

//GinkgoRandomSeed returns the seed used to randomize spec execution order. It is
//useful for seeding your own pseudorandom number generators (PRNGs) to ensure
//consistent executions from run to run, where your tests contain variability (for
//example, when selecting random test data).
func GinkgoRandomSeed() int64 {
	return config.GinkgoConfig.RandomSeed
}

//GinkgoParallelNode returns the parallel node number for the current ginkgo process

|

||||

//The node number is 1-indexed

|

||||

func GinkgoParallelNode() int {

|

||||

return config.GinkgoConfig.ParallelNode

|

||||

}

|

||||

|

||||

//Some matcher libraries or legacy codebases require a *testing.T

|

||||

//GinkgoT implements an interface analogous to *testing.T and can be used if

|

||||

//the library in question accepts *testing.T through an interface

|

||||

//

|

||||

// For example, with testify:

|

||||

// assert.Equal(GinkgoT(), 123, 123, "they should be equal")

|

||||

//

|

||||

// Or with gomock:

|

||||

// gomock.NewController(GinkgoT())

|

||||

//

|

||||

// GinkgoT() takes an optional offset argument that can be used to get the

|

||||

// correct line number associated with the failure.

|

||||

func GinkgoT(optionalOffset ...int) GinkgoTInterface {

|

||||

offset := 3

|

||||

if len(optionalOffset) > 0 {

|

||||

offset = optionalOffset[0]

|

||||

}

|

||||

return testingtproxy.New(GinkgoWriter, Fail, offset)

|

||||

}

|

||||

|

||||

//The interface returned by GinkgoT(). This covers most of the methods

|

||||

//in the testing package's T.

|

||||

type GinkgoTInterface interface {

|

||||

Fail()

|

||||

Error(args ...interface{})

|

||||

Errorf(format string, args ...interface{})

|

||||

FailNow()

|

||||

Fatal(args ...interface{})

|

||||

Fatalf(format string, args ...interface{})

|

||||

Log(args ...interface{})

|

||||

Logf(format string, args ...interface{})

|

||||

Failed() bool

|

||||

Parallel()

|

||||

Skip(args ...interface{})

|

||||

Skipf(format string, args ...interface{})

|

||||

SkipNow()

|

||||

Skipped() bool

|

||||

}

|

||||

|

||||

//Custom Ginkgo test reporters must implement the Reporter interface.

|

||||

//

|

||||

//The custom reporter is passed in a SuiteSummary when the suite begins and ends,

|

||||

//and a SpecSummary just before a spec begins and just after a spec ends

|

||||

type Reporter reporters.Reporter

|

||||

|

||||

//Asynchronous specs are given a channel of the Done type. You must close or write to the channel

|

||||

//to tell Ginkgo that your async test is done.

|

||||

type Done chan<- interface{}

|

||||

|

||||

//GinkgoTestDescription represents the information about the current running test returned by CurrentGinkgoTestDescription

|

||||

// FullTestText: a concatenation of ComponentTexts and the TestText

|

||||

// ComponentTexts: a list of all texts for the Describes & Contexts leading up to the current test

|

||||

// TestText: the text in the actual It or Measure node

|

||||

// IsMeasurement: true if the current test is a measurement

|

||||

// FileName: the name of the file containing the current test

|

||||

// LineNumber: the line number for the current test

|

||||

// Failed: if the current test has failed, this will be true (useful in an AfterEach)

|

||||

type GinkgoTestDescription struct {

|

||||

FullTestText string

|

||||

ComponentTexts []string

|

||||

TestText string

|

||||

|

||||

IsMeasurement bool

|

||||

|

||||

FileName string

|

||||

LineNumber int

|

||||

|

||||

Failed bool

|

||||

}

|

||||

|

||||

//CurrentGinkgoTestDescription returns information about the current running test.

|

||||

func CurrentGinkgoTestDescription() GinkgoTestDescription {

|

||||

summary, ok := globalSuite.CurrentRunningSpecSummary()

|

||||

if !ok {

|

||||

return GinkgoTestDescription{}

|

||||

}

|

||||

|

||||

subjectCodeLocation := summary.ComponentCodeLocations[len(summary.ComponentCodeLocations)-1]

|

||||

|

||||

return GinkgoTestDescription{

|

||||

ComponentTexts: summary.ComponentTexts[1:],

|

||||

FullTestText: strings.Join(summary.ComponentTexts[1:], " "),

|

||||

TestText: summary.ComponentTexts[len(summary.ComponentTexts)-1],

|

||||

IsMeasurement: summary.IsMeasurement,

|

||||

FileName: subjectCodeLocation.FileName,

|

||||

LineNumber: subjectCodeLocation.LineNumber,

|

||||

Failed: summary.HasFailureState(),

|

||||

}

|

||||

}

|

||||

|

||||

//Measurement tests receive a Benchmarker.

|

||||

//

|

||||

//You use the Time() function to time how long the passed in body function takes to run

|

||||

//You use the RecordValue() function to track arbitrary numerical measurements.

|

||||

//The RecordValueWithPrecision() function can be used alternatively to provide the unit

|

||||

//and resolution of the numeric measurement.

|

||||

//The optional info argument is passed to the test reporter and can be used to

|

||||

// provide the measurement data to a custom reporter with context.

|

||||

//

|

||||

//See http://onsi.github.io/ginkgo/#benchmark_tests for more details

|

||||

type Benchmarker interface {

|

||||

Time(name string, body func(), info ...interface{}) (elapsedTime time.Duration)

|

||||

RecordValue(name string, value float64, info ...interface{})

|

||||

RecordValueWithPrecision(name string, value float64, units string, precision int, info ...interface{})

|

||||

}

|

||||

|

||||

//RunSpecs is the entry point for the Ginkgo test runner.

|

||||

//You must call this within a Golang testing TestX(t *testing.T) function.

|

||||

//

|

||||

//To bootstrap a test suite you can use the Ginkgo CLI:

|

||||

//

|

||||

// ginkgo bootstrap

|

||||

func RunSpecs(t GinkgoTestingT, description string) bool {

|

||||

specReporters := []Reporter{buildDefaultReporter()}

|

||||

return RunSpecsWithCustomReporters(t, description, specReporters)

|

||||

}

|

||||

|

||||

//To run your tests with Ginkgo's default reporter and your custom reporter(s), replace

|

||||

//RunSpecs() with this method.

|

||||

func RunSpecsWithDefaultAndCustomReporters(t GinkgoTestingT, description string, specReporters []Reporter) bool {

|

||||

specReporters = append([]Reporter{buildDefaultReporter()}, specReporters...)

|

||||

return RunSpecsWithCustomReporters(t, description, specReporters)

|

||||

}

|

||||

|

||||

//To run your tests with your custom reporter(s) (and *not* Ginkgo's default reporter), replace

|

||||

//RunSpecs() with this method. Note that parallel tests will not work correctly without the default reporter

|

||||

func RunSpecsWithCustomReporters(t GinkgoTestingT, description string, specReporters []Reporter) bool {

|

||||

writer := GinkgoWriter.(*writer.Writer)

|

||||

writer.SetStream(config.DefaultReporterConfig.Verbose)

|

||||

reporters := make([]reporters.Reporter, len(specReporters))

|

||||

for i, reporter := range specReporters {

|

||||

reporters[i] = reporter

|

||||

}

|

||||

passed, hasFocusedTests := globalSuite.Run(t, description, reporters, writer, config.GinkgoConfig)

|

||||

if passed && hasFocusedTests {

|

||||

fmt.Println("PASS | FOCUSED")

|

||||

os.Exit(types.GINKGO_FOCUS_EXIT_CODE)

|

||||

}

|

||||

return passed

|

||||

}

|

||||

|

||||

func buildDefaultReporter() Reporter {

|

||||

remoteReportingServer := config.GinkgoConfig.StreamHost

|

||||

if remoteReportingServer == "" {

|

||||

stenographer := stenographer.New(!config.DefaultReporterConfig.NoColor, config.GinkgoConfig.FlakeAttempts > 1)

|

||||

return reporters.NewDefaultReporter(config.DefaultReporterConfig, stenographer)

|

||||

} else {

|

||||

return remote.NewForwardingReporter(remoteReportingServer, &http.Client{}, remote.NewOutputInterceptor())

|

||||

}

|

||||

}

|

||||

|

||||

//Skip notifies Ginkgo that the current spec should be skipped.

|

||||

func Skip(message string, callerSkip ...int) {

|

||||

skip := 0

|

||||

if len(callerSkip) > 0 {

|

||||

skip = callerSkip[0]

|

||||

}

|

||||

|

||||

globalFailer.Skip(message, codelocation.New(skip+1))

|

||||

panic(GINKGO_PANIC)

|

||||

}

|

||||

|

||||

//Fail notifies Ginkgo that the current spec has failed. (Gomega will call Fail for you automatically when an assertion fails.)

|

||||

func Fail(message string, callerSkip ...int) {

|

||||

skip := 0

|

||||

if len(callerSkip) > 0 {

|

||||

skip = callerSkip[0]

|

||||

}

|

||||

|

||||

globalFailer.Fail(message, codelocation.New(skip+1))

|

||||

panic(GINKGO_PANIC)

|

||||

}

|

||||

|

||||

//GinkgoRecover should be deferred at the top of any spawned goroutine that (may) call `Fail`

|

||||

//Since Gomega assertions call fail, you should throw a `defer GinkgoRecover()` at the top of any goroutine that

|

||||

//calls out to Gomega

|

||||

//

|

||||

//Here's why: Ginkgo's `Fail` method records the failure and then panics to prevent

|

||||

//further assertions from running. This panic must be recovered. Ginkgo does this for you

|

||||

//if the panic originates in a Ginkgo node (an It, BeforeEach, etc...)

|

||||

//

|

||||

//Unfortunately, if a panic originates on a goroutine *launched* from one of these nodes there's no

|

||||

//way for Ginkgo to rescue the panic. To do this, you must remember to `defer GinkgoRecover()` at the top of such a goroutine.

|

||||

func GinkgoRecover() {

|

||||

e := recover()

|

||||

if e != nil {

|

||||

globalFailer.Panic(codelocation.New(1), e)

|

||||

}

|

||||

}

|

||||

|

||||

//Describe blocks allow you to organize your specs. A Describe block can contain any number of

|

||||

//BeforeEach, AfterEach, JustBeforeEach, It, and Measurement blocks.

|

||||

//

|

||||

//In addition you can nest Describe and Context blocks. Describe and Context blocks are functionally

|

||||

//equivalent. The difference is purely semantic -- you typically Describe the behavior of an object

|

||||

//or method and, within that Describe, outline a number of Contexts.

|

||||

func Describe(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypeNone, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can focus the tests within a describe block using FDescribe

|

||||

func FDescribe(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypeFocused, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark the tests within a describe block as pending using PDescribe

|

||||

func PDescribe(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypePending, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark the tests within a describe block as pending using XDescribe

|

||||

func XDescribe(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypePending, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//Context blocks allow you to organize your specs. A Context block can contain any number of

|

||||

//BeforeEach, AfterEach, JustBeforeEach, It, and Measurement blocks.

|

||||

//

|

||||

//In addition you can nest Describe and Context blocks. Describe and Context blocks are functionally

|

||||

//equivalent. The difference is purely semantic -- you typically Describe the behavior of an object

|

||||

//or method and, within that Describe, outline a number of Contexts.

|

||||

func Context(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypeNone, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can focus the tests within a context block using FContext

|

||||

func FContext(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypeFocused, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark the tests within a context block as pending using PContext

|

||||

func PContext(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypePending, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark the tests within a context block as pending using XContext

|

||||

func XContext(text string, body func()) bool {

|

||||

globalSuite.PushContainerNode(text, body, types.FlagTypePending, codelocation.New(1))

|

||||

return true

|

||||

}

|

||||

|

||||

//It blocks contain your test code and assertions. You cannot nest any other Ginkgo blocks

|

||||

//within an It block.

|

||||

//

|

||||

//Ginkgo will normally run It blocks synchronously. To perform asynchronous tests, pass a

|

||||

//function that accepts a Done channel. When you do this, you can also provide an optional timeout.

|

||||

func It(text string, body interface{}, timeout ...float64) bool {

|

||||

globalSuite.PushItNode(text, body, types.FlagTypeNone, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can focus individual Its using FIt

|

||||

func FIt(text string, body interface{}, timeout ...float64) bool {

|

||||

globalSuite.PushItNode(text, body, types.FlagTypeFocused, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark Its as pending using PIt

|

||||

func PIt(text string, _ ...interface{}) bool {

|

||||

globalSuite.PushItNode(text, func() {}, types.FlagTypePending, codelocation.New(1), 0)

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark Its as pending using XIt

|

||||

func XIt(text string, _ ...interface{}) bool {

|

||||

globalSuite.PushItNode(text, func() {}, types.FlagTypePending, codelocation.New(1), 0)

|

||||

return true

|

||||

}

|

||||

|

||||

//Specify blocks are aliases for It blocks and allow for more natural wording in situations

|

||||

//where "It" does not fit into a natural sentence flow. All the same protocols apply for Specify blocks

|

||||

//which apply to It blocks.

|

||||

func Specify(text string, body interface{}, timeout ...float64) bool {

|

||||

return It(text, body, timeout...)

|

||||

}

|

||||

|

||||

//You can focus individual Specifys using FSpecify

|

||||

func FSpecify(text string, body interface{}, timeout ...float64) bool {

|

||||

return FIt(text, body, timeout...)

|

||||

}

|

||||

|

||||

//You can mark Specifys as pending using PSpecify

|

||||

func PSpecify(text string, is ...interface{}) bool {

|

||||

return PIt(text, is...)

|

||||

}

|

||||

|

||||

//You can mark Specifys as pending using XSpecify

|

||||

func XSpecify(text string, is ...interface{}) bool {

|

||||

return XIt(text, is...)

|

||||

}

|

||||

|

||||

//By allows you to better document large Its.

|

||||

//

|

||||

//Generally you should try to keep your Its short and to the point. This is not always possible, however,

|

||||

//especially in the context of integration tests that capture a particular workflow.

|

||||

//

|

||||

//By allows you to document such flows. By must be called within a runnable node (It, BeforeEach, Measure, etc...)

|

||||

//By will simply log the passed in text to the GinkgoWriter. If By is handed a function it will immediately run the function.

|

||||

func By(text string, callbacks ...func()) {

|

||||

preamble := "\x1b[1mSTEP\x1b[0m"

|

||||

if config.DefaultReporterConfig.NoColor {

|

||||

preamble = "STEP"

|

||||

}

|

||||

fmt.Fprintln(GinkgoWriter, preamble+": "+text)

|

||||

if len(callbacks) == 1 {

|

||||

callbacks[0]()

|

||||

}

|

||||

if len(callbacks) > 1 {

|

||||

panic("just one callback per By, please")

|

||||

}

|

||||

}

|

||||

|

||||

//Measure blocks run the passed in body function repeatedly (determined by the samples argument)

|

||||

//and accumulate metrics provided to the Benchmarker by the body function.

|

||||

//

|

||||

//The body function must have the signature:

|

||||

// func(b Benchmarker)

|

||||

func Measure(text string, body interface{}, samples int) bool {

|

||||

globalSuite.PushMeasureNode(text, body, types.FlagTypeNone, codelocation.New(1), samples)

|

||||

return true

|

||||

}

|

||||

|

||||

//You can focus individual Measures using FMeasure

|

||||

func FMeasure(text string, body interface{}, samples int) bool {

|

||||

globalSuite.PushMeasureNode(text, body, types.FlagTypeFocused, codelocation.New(1), samples)

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark Measurements as pending using PMeasure

|

||||

func PMeasure(text string, _ ...interface{}) bool {

|

||||

globalSuite.PushMeasureNode(text, func(b Benchmarker) {}, types.FlagTypePending, codelocation.New(1), 0)

|

||||

return true

|

||||

}

|

||||

|

||||

//You can mark Measurements as pending using XMeasure

|

||||

func XMeasure(text string, _ ...interface{}) bool {

|

||||

globalSuite.PushMeasureNode(text, func(b Benchmarker) {}, types.FlagTypePending, codelocation.New(1), 0)

|

||||

return true

|

||||

}

|

||||

|

||||

//BeforeSuite blocks are run just once before any specs are run. When running in parallel, each

|

||||

//parallel node process will call BeforeSuite.

|

||||

//

|

||||

//BeforeSuite blocks can be made asynchronous by providing a body function that accepts a Done channel

|

||||

//

|

||||

//You may only register *one* BeforeSuite handler per test suite. You typically do so in your bootstrap file at the top level.

|

||||

func BeforeSuite(body interface{}, timeout ...float64) bool {

|

||||

globalSuite.SetBeforeSuiteNode(body, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//AfterSuite blocks are *always* run after all the specs regardless of whether specs have passed or failed.

|

||||

//Moreover, if Ginkgo receives an interrupt signal (^C) it will attempt to run the AfterSuite before exiting.

|

||||

//

|

||||

//When running in parallel, each parallel node process will call AfterSuite.

|

||||

//

|

||||

//AfterSuite blocks can be made asynchronous by providing a body function that accepts a Done channel

|

||||

//

|

||||

//You may only register *one* AfterSuite handler per test suite. You typically do so in your bootstrap file at the top level.

|

||||

func AfterSuite(body interface{}, timeout ...float64) bool {

|

||||

globalSuite.SetAfterSuiteNode(body, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//SynchronizedBeforeSuite blocks are primarily meant to solve the problem of setting up singleton external resources shared across

|

||||

//nodes when running tests in parallel. For example, say you have a shared database that you can only start one instance of that

|

||||

//must be used in your tests. When running in parallel, only one node should set up the database and all other nodes should wait

|

||||

//until that node is done before running.

|

||||

//

|

||||

//SynchronizedBeforeSuite accomplishes this by taking *two* function arguments. The first is only run on parallel node #1. The second is

|

||||

//run on all nodes, but *only* after the first function completes successfully. Ginkgo also makes it possible to send data from the first function (on Node 1)

|

||||

//to the second function (on all nodes).

|

||||

//

|

||||

//The functions have the following signatures. The first function (which only runs on node 1) has the signature:

|

||||

//

|

||||

// func() []byte

|

||||

//

|

||||

//or, to run asynchronously:

|

||||

//

|

||||

// func(done Done) []byte

|

||||

//

|

||||

//The byte array returned by the first function is then passed to the second function, which has the signature:

|

||||

//

|

||||

// func(data []byte)

|

||||

//

|

||||

//or, to run asynchronously:

|

||||

//

|

||||

// func(data []byte, done Done)

|

||||

//

|

||||

//Here's a simple pseudo-code example that starts a shared database on Node 1 and shares the database's address with the other nodes:

|

||||

//

|

||||

// var dbClient db.Client

|

||||

// var dbRunner db.Runner

|

||||

//

|

||||

// var _ = SynchronizedBeforeSuite(func() []byte {

|

||||

// dbRunner = db.NewRunner()

|

||||

// err := dbRunner.Start()

|

||||

// Ω(err).ShouldNot(HaveOccurred())

|

||||

// return []byte(dbRunner.URL)

|

||||

// }, func(data []byte) {

|

||||

// dbClient = db.NewClient()

|

||||

// err := dbClient.Connect(string(data))

|

||||

// Ω(err).ShouldNot(HaveOccurred())

|

||||

// })

|

||||

func SynchronizedBeforeSuite(node1Body interface{}, allNodesBody interface{}, timeout ...float64) bool {

|

||||

globalSuite.SetSynchronizedBeforeSuiteNode(

|

||||

node1Body,

|

||||

allNodesBody,

|

||||

codelocation.New(1),

|

||||

parseTimeout(timeout...),

|

||||

)

|

||||

return true

|

||||

}

|

||||

|

||||

//SynchronizedAfterSuite blocks complement the SynchronizedBeforeSuite blocks in solving the problem of setting up

|

||||

//external singleton resources shared across nodes when running tests in parallel.

|

||||

//

|

||||

//SynchronizedAfterSuite accomplishes this by taking *two* function arguments. The first runs on all nodes. The second runs only on parallel node #1

|

||||

//and *only* after all other nodes have finished and exited. This ensures that node 1, and any resources it is running, remain alive until

|

||||

//all other nodes are finished.

|

||||

//

|

||||

//Both functions have the same signature: either func() or func(done Done) to run asynchronously.

|

||||

//

|

||||

//Here's a pseudo-code example that complements that given in SynchronizedBeforeSuite. Here, SynchronizedAfterSuite is used to tear down the shared database

|

||||

//only after all nodes have finished:

|

||||

//

|

||||

// var _ = SynchronizedAfterSuite(func() {

|

||||

// dbClient.Cleanup()

|

||||

// }, func() {

|

||||

// dbRunner.Stop()

|

||||

// })

|

||||

func SynchronizedAfterSuite(allNodesBody interface{}, node1Body interface{}, timeout ...float64) bool {

|

||||

globalSuite.SetSynchronizedAfterSuiteNode(

|

||||

allNodesBody,

|

||||

node1Body,

|

||||

codelocation.New(1),

|

||||

parseTimeout(timeout...),

|

||||

)

|

||||

return true

|

||||

}

|

||||

|

||||

//BeforeEach blocks are run before It blocks. When multiple BeforeEach blocks are defined in nested

|

||||

//Describe and Context blocks the outermost BeforeEach blocks are run first.

|

||||

//

|

||||

//Like It blocks, BeforeEach blocks can be made asynchronous by providing a body function that accepts

|

||||

//a Done channel

|

||||

func BeforeEach(body interface{}, timeout ...float64) bool {

|

||||

globalSuite.PushBeforeEachNode(body, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//JustBeforeEach blocks are run before It blocks but *after* all BeforeEach blocks. For more details,

|

||||

//read the [documentation](http://onsi.github.io/ginkgo/#separating_creation_and_configuration_)

|

||||

//

|

||||

//Like It blocks, JustBeforeEach blocks can be made asynchronous by providing a body function that accepts

|

||||

//a Done channel

|

||||

func JustBeforeEach(body interface{}, timeout ...float64) bool {

|

||||

globalSuite.PushJustBeforeEachNode(body, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

//AfterEach blocks are run after It blocks. When multiple AfterEach blocks are defined in nested

|

||||

//Describe and Context blocks the innermost AfterEach blocks are run first.

|

||||

//

|

||||

//Like It blocks, AfterEach blocks can be made asynchronous by providing a body function that accepts

|

||||

//a Done channel

|

||||

func AfterEach(body interface{}, timeout ...float64) bool {

|

||||

globalSuite.PushAfterEachNode(body, codelocation.New(1), parseTimeout(timeout...))

|

||||

return true

|

||||

}

|

||||

|

||||

func parseTimeout(timeout ...float64) time.Duration {

|

||||

if len(timeout) == 0 {

|

||||

return time.Duration(defaultTimeout * int64(time.Second))

|

||||

} else {

|

||||

return time.Duration(timeout[0] * float64(time.Second))

|

||||

}

|

||||

}

|

||||

32

vendor/github.com/onsi/ginkgo/internal/codelocation/code_location.go

generated

vendored

Normal file

|

|

@ -0,0 +1,32 @@

|

|||

package codelocation

|

||||

|

||||

import (

|

||||

"regexp"

|

||||

"runtime"

|

||||

"runtime/debug"

|

||||

"strings"

|

||||

|

||||

"github.com/onsi/ginkgo/types"

|

||||

)

|

||||

|

||||

func New(skip int) types.CodeLocation {

|

||||

_, file, line, _ := runtime.Caller(skip + 1)

|

||||

stackTrace := PruneStack(string(debug.Stack()), skip)

|

||||

return types.CodeLocation{FileName: file, LineNumber: line, FullStackTrace: stackTrace}

|

||||

}

|

||||

|

||||

func PruneStack(fullStackTrace string, skip int) string {

|

||||

stack := strings.Split(fullStackTrace, "\n")

|

||||

if len(stack) > 2*(skip+1) {

|

||||

stack = stack[2*(skip+1):]

|

||||

}

|

||||

prunedStack := []string{}

|

||||

re := regexp.MustCompile(`\/ginkgo\/|\/pkg\/testing\/|\/pkg\/runtime\/`)

|

||||

for i := 0; i < len(stack)/2; i++ {

|

||||

if !re.Match([]byte(stack[i*2])) {

|

||||

prunedStack = append(prunedStack, stack[i*2])

|

||||

prunedStack = append(prunedStack, stack[i*2+1])

|

||||

}

|

||||

}

|

||||

return strings.Join(prunedStack, "\n")

|

||||

}

|

||||

13

vendor/github.com/onsi/ginkgo/internal/codelocation/code_location_suite_test.go

generated

vendored

Normal file

|

|

@ -0,0 +1,13 @@

|

|||

package codelocation_test

|

||||

|

||||

import (

|

||||

. "github.com/onsi/ginkgo"

|

||||

. "github.com/onsi/gomega"

|

||||

|

||||

"testing"

|

||||

)

|

||||

|

||||

func TestCodelocation(t *testing.T) {

|

||||

RegisterFailHandler(Fail)

|

||||

RunSpecs(t, "CodeLocation Suite")

|

||||

}

|

||||

79

vendor/github.com/onsi/ginkgo/internal/codelocation/code_location_test.go

generated

vendored

Normal file

|

|

@ -0,0 +1,79 @@

|

|||

package codelocation_test

|

||||

|

||||

import (

|

||||

. "github.com/onsi/ginkgo"

|

||||

"github.com/onsi/ginkgo/internal/codelocation"

|

||||

"github.com/onsi/ginkgo/types"

|

||||

. "github.com/onsi/gomega"

|

||||

"runtime"

|

||||

)

|

||||

|

||||

var _ = Describe("CodeLocation", func() {

|

||||

var (

|

||||

codeLocation types.CodeLocation

|

||||

expectedFileName string

|

||||